Behind “EXHIBIT”: Reimagining German Expressionist Cinema Through AI Filmmaking

Mar 2026

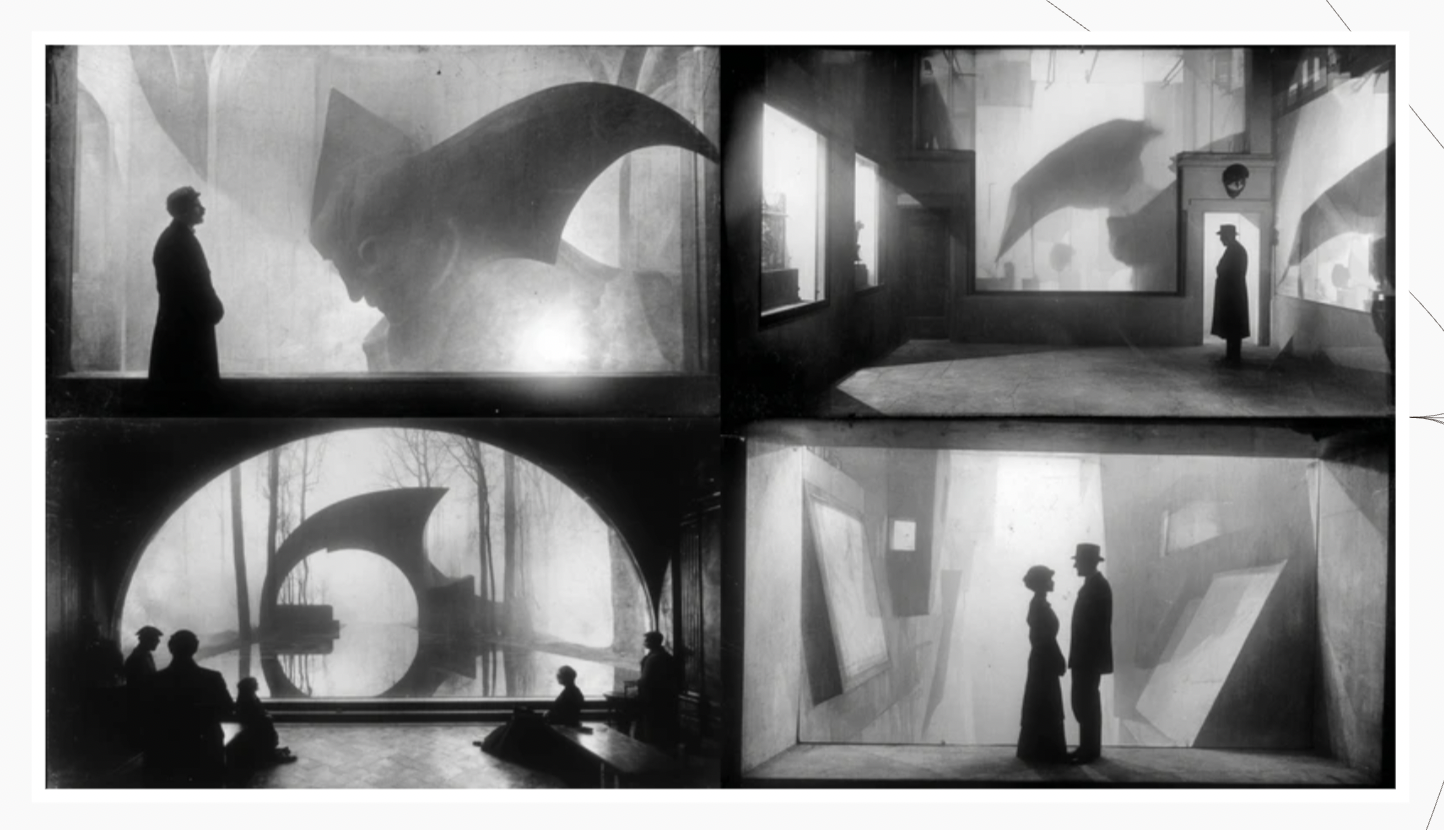

EXHIBIT is an AI-generated short film that tells a mysterious, dreamlike, and absurd story about a museum guard during his night shift. It is rendered in the style of German Expressionist cinema, which served as the primary inspiration for the project.

I began with in-depth research into German Expressionist film, examining its visual aesthetics, narrative structures, and editing techniques. Based on this research, I developed an initial screenplay draft and a brief storyboard in my notebook. For the plot, I focused on three key elements: an eye-catching incident at the beginning of the film to immediately capture the audience's attention; an unexpected twist at the end that drives the story to its climax; and a carefully constructed narrative buildup that bridges the two. This middle progression creates subtle illusions and misdirection, making the final revelation more surprising and impactful. I also collected visual references from several iconic German Expressionist films, compiling both scene and character references into a folder. With this conceptual and visual groundwork in place, I moved to Flick Art to begin the AI-driven development of the film.

The first step in Flick Art was to upload the visual references I had gathered during the preparation stage into My Styles using the Explore Styles feature, building a consistent German Expressionist style for image generation. It was important to include references for both environments and close-up character shots, since this improved the accuracy and controllability of later generations.

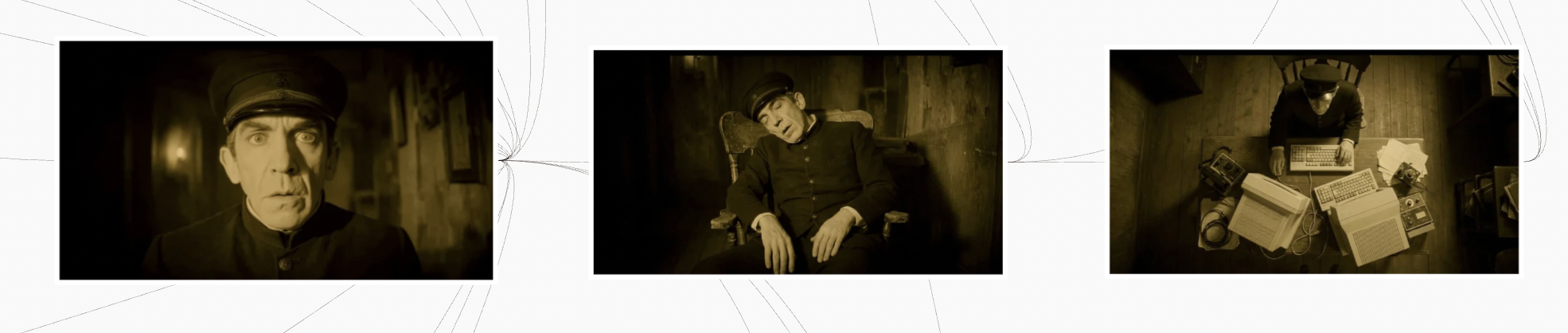

With the customized style established, I was able to begin the AI generation process. I started with character generation, as the main character, the museum guard, serves as a central thread that connects and drives the narrative. At this stage, I chose the Midjourney model for the first round of image generation, and then used Nano Banana Pro for image editing, as this model offers higher precision when adjusting specific visual details. I also used ChatGPT to help refine and expand the character description, including details such as appearance, age, and clothing. This allowed me to create more detailed prompts, resulting in image generations that felt more realistic and visually vivid.

Once I generated a character image that I was satisfied with, I moved on to the next stage: either generating videos directly from the image or adapting it into different but related scenes featuring the same character. This process allowed me to build a cohesive set of character-driven visuals, develop richer footage, and gradually construct the narrative through these evolving scenes.

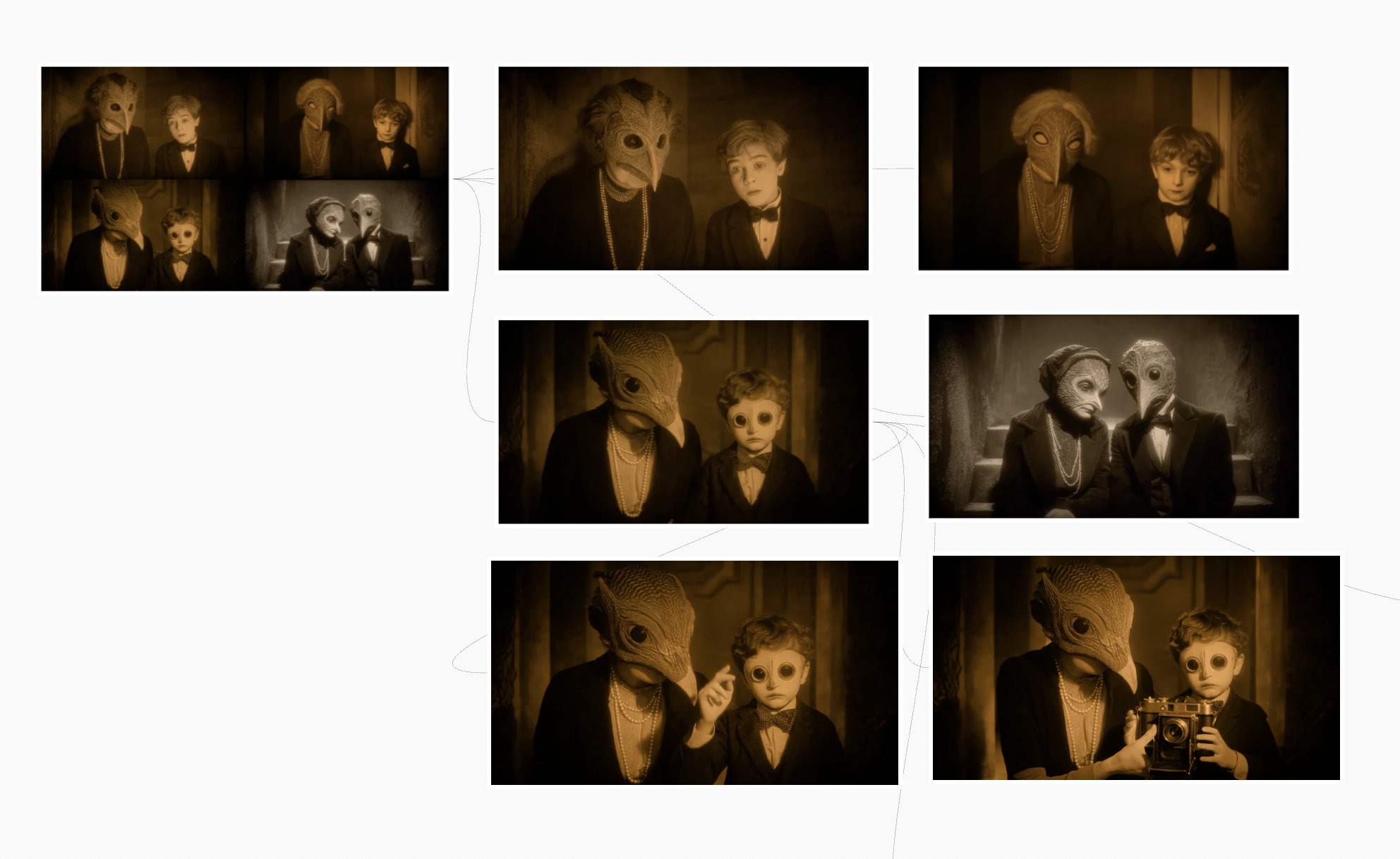

The same structured workflow was later extended to the development of all supporting characters, ensuring stylistic consistency throughout the film.

Next, I moved on to environmental scene generation, which included the museum exterior as well as interior spaces such as the lobby and gallery. For this stage, I selected the styles that contained architectural references from the customized style library to guide the visual consistency and accuracy of the scenes.

I used two key techniques in this phase. First, once I had generated an environmental image that I was satisfied with, I could either create generative videos that showcase the space through camera movement, such as slow zoom-ins, or integrate previously generated characters into the empty environment. To insert a character into a scene, I used the environmental image as the base image for editing and added the character image as a secondary reference. Through image references and prompt guidance in Nano Banana Pro, I was able to control how the character was placed within the space. This approach allowed me to merge character and environment generation into a unified visual pipeline, creating cohesive scenes that could later be used for video generation.

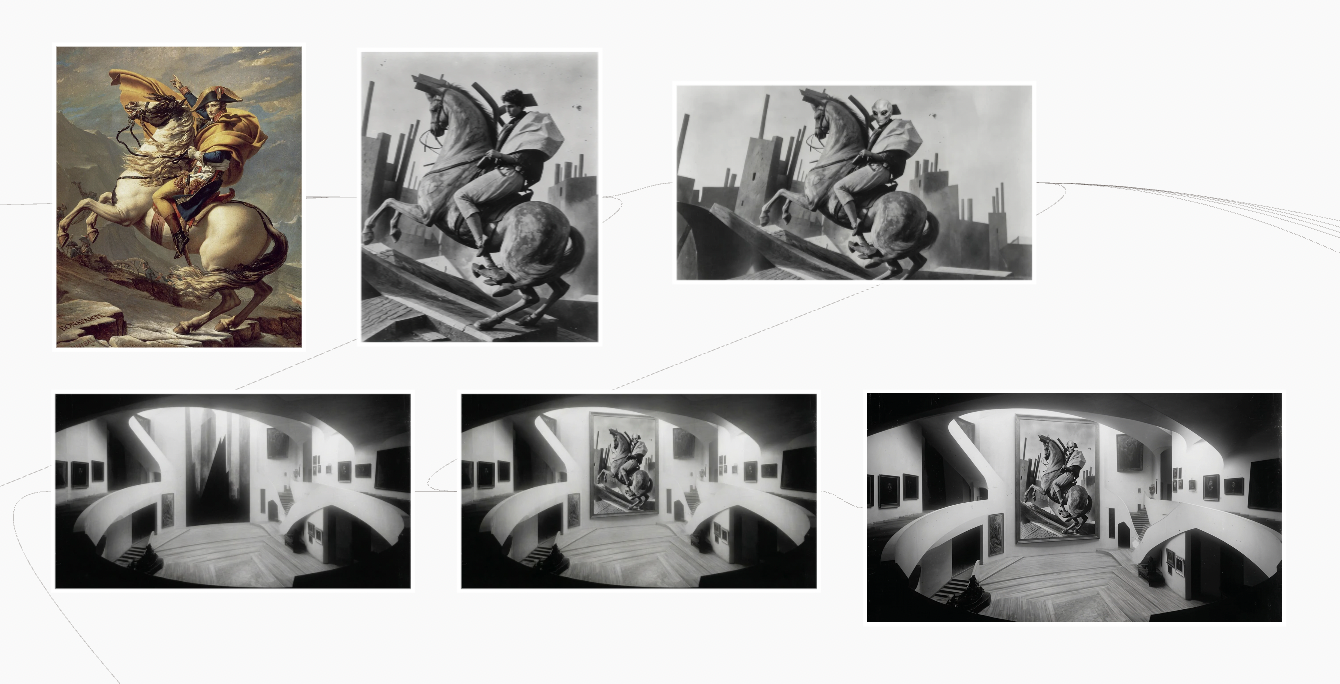

The second technique is useful for bringing a specific existing image into a generated scene. In my case, I wanted to include a painting inspired by Napoleon Crossing the Alps within the generated gallery space. To achieve this, I first uploaded the original reference image into Flick Art and used the Flick Advanced model to transform its visual style into German Expressionism. I then used Nano Banana Pro for further editing, integrating the stylized painting into the previously generated gallery environment. This method allowed me to incorporate a recognizable visual reference while maintaining stylistic consistency within the generated scene.

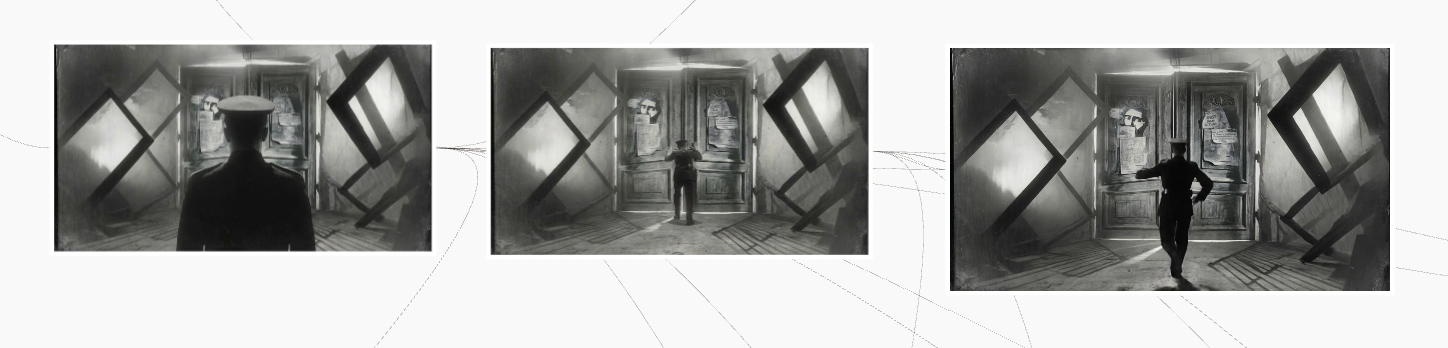

For video generation, beyond using a single image as the starting frame and guiding motion through prompts, another approach is to generate two images that serve as the start and end frames. The video model then generates the motion between these two frames, guided by a prompt describing the desired action. In one shot, I wanted the museum guard to run toward a door and place both hands on it as if trying to open it. To achieve this movement accurately, I first generated a half-body close-up of the guard standing in front of the door, followed by a second image showing his full body in the act of pushing against it. Using these two images as the start and end frames, I then applied the King O3 Pro model to generate the motion of him running toward the door. This approach provided greater control over both movement and composition than single-frame video generation.
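One way to keep track of these two approaches is to write each shot down as a small record before generating it. The Python sketch below is only a planning aid under my own assumptions: `ShotPlan`, its fields, and the file names are hypothetical and are not part of Flick Art or any model's API. It simply records whether a shot is driven by a single start frame or by a start and end frame pair.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ShotPlan:
    """Hypothetical planning record for one generated video shot."""
    name: str
    motion_prompt: str                # text describing the desired action
    start_frame: str                  # image used as the first frame
    end_frame: Optional[str] = None   # image used as the last frame, if any

    @property
    def mode(self) -> str:
        # A shot with an end frame uses the start/end-frame approach;
        # otherwise motion is guided by the prompt from a single frame.
        if self.end_frame is not None:
            return "start_end_frames"
        return "single_frame"


def describe(shot: ShotPlan) -> str:
    """Return a one-line summary of the shot for a shot list."""
    if shot.end_frame is not None:
        frames = f"{shot.start_frame} -> {shot.end_frame}"
    else:
        frames = shot.start_frame
    return f"{shot.name} [{shot.mode}] {frames}: {shot.motion_prompt}"


# File names below are placeholders, not the actual project files.
door_shot = ShotPlan(
    name="guard_runs_to_door",
    motion_prompt="The museum guard runs toward the door and places both hands on it",
    start_frame="guard_half_body_at_door.png",
    end_frame="guard_full_body_pushing_door.png",
)
zoom_shot = ShotPlan(
    name="gallery_slow_zoom",
    motion_prompt="Slow zoom-in on the empty gallery",
    start_frame="gallery_interior.png",
)

for shot in (door_shot, zoom_shot):
    print(describe(shot))
```

Keeping the end frame optional means the same record covers both the slow zoom-ins on empty environments and the more tightly controlled character shots.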

Creating EXHIBIT was not just an experiment in AI filmmaking, but an exploration of how emerging tools can reinterpret historical film movements through a contemporary lens. By combining research-driven visual development with an iterative AI workflow, I was able to translate the emotional intensity and visual language of German Expressionism into a new, generative medium. This process also reshaped how I think about filmmaking. Instead of a linear pipeline, AI tools enabled a more fluid and modular approach, where characters, environments, and motion could be developed independently and later unified into a cohesive narrative. The ability to rapidly iterate between still images and video allowed for both creative experimentation and precise control.

Ultimately, EXHIBIT represents a dialogue between past and future: a classic cinematic style reimagined through artificial intelligence. As generative tools continue to evolve, I'm excited to further explore how AI can expand visual storytelling, not as a replacement for creativity, but as a new language for it.